From a Digital Perspective: Why the Red Wave Wasn't and Why Pundits and Pollsters Missed the Blue Wall that Stopped It

And Something Interesting About Elon Musk and Twitter -- By Alan Rosenblatt, Ph.D., Craig Johnson, Andrea Haverdink, and Nicolai Haddal

For months, the media told us that Democrats would get wiped out in the 2022 midterm elections, and that Republicans would take control of both houses of Congress. But when all was said and done, the “Red Wave” turned out to be more like a “Red Puddle.” Maybe Democrats stepped in the puddle and got our shoes a little wet, but there was certainly nothing resembling the tsunami Fox News crowed about for weeks. In the end, Democrats held the Senate and lost the House by only the slimmest of margins.

How could the pundits get it so wrong?

Many pundits will say that it was the pollsters who got it wrong, and that they were simply reporting what they saw in the polls. This, however, appears to be only a small and more nuanced part of the larger story. In fact, we found strong evidence in our research to suggest that opinion polling that fails to include an analysis of the online conversation is fundamentally doomed to miss important information about what voters think about politics, policy, and candidates. Our analysis reveals that the pundits were simply not systematically paying attention to the online conversation. This is how they were able to miss all the signals of an evaporating wave and the construction of a “Blue Wall.”

What happened with opinion polls?

By now, several news stories have uncovered the way Republican polling firms “flooded the zone” with survey results that leaned heavily on unjustified GOP-friendly likely voter models. Those polls were then incorporated into poll-averaging services, which artificially skewed mainstream media presentations toward a Republican “Red Wave.” On top of that, most of the pundits and reporters tasked with election analysis in the months leading up to Election Day are not adequately trained in survey research methodology and don’t truly understand how to interpret the polls. (Don’t even get us started on the methodological faux pas of aggregating opinion polls that employ different sampling processes and likely voter filters.)

Using sample-based telephone surveys to understand public opinion has been a methodology on the verge of collapse for many years. Even if pollsters could somehow develop a flawless set of assumptions to filter for likely voters, response rates to surveys are so low that the ability to use sample statistics to estimate opinions in the population could fail at any time.

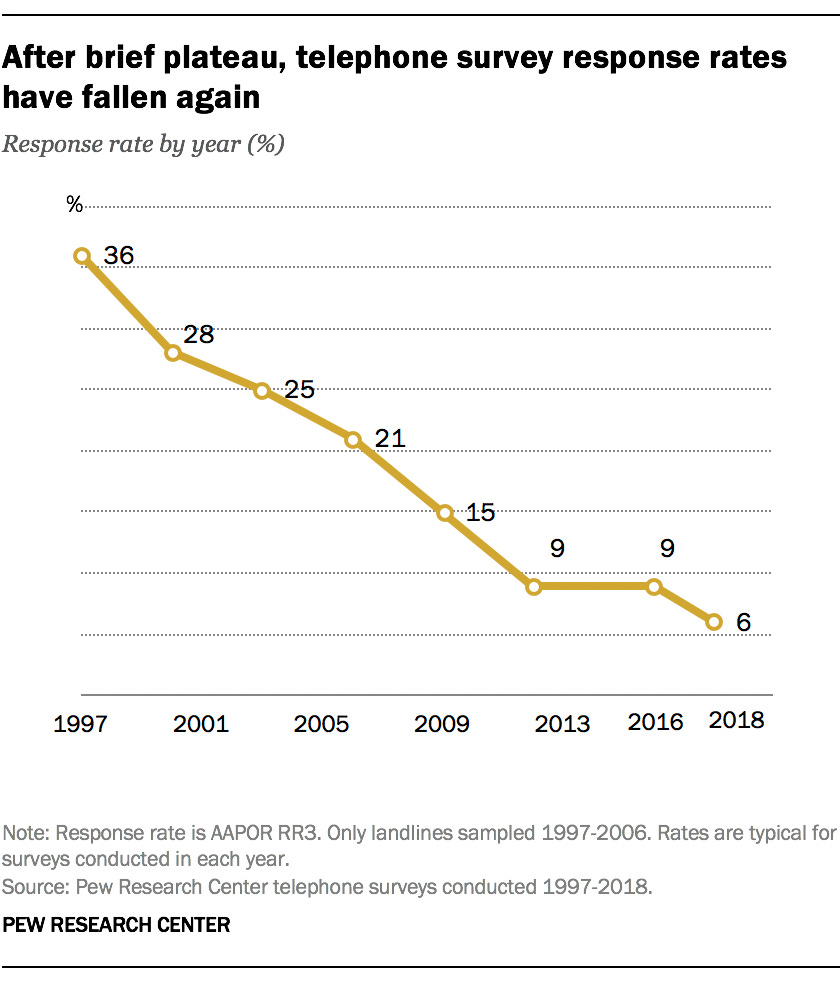

In the past, pollsters maintained that a response rate of at least 12% was required to ensure a probability sample to which the “Central Limit Theorem” can be applied to estimate public opinion statistics for the population. But according to Pew Research, telephone survey response rates dropped below 12% more than a decade ago (see Fig. 1).

This decline is partly due to caller ID on mobile phones, which has allowed multiple generations to grow up preferring to screen their calls. The likelihood that a Millennial or “Zoomer” would answer a call from a pollster that shows up as unknown or “spam” is substantially lower than for other generations, leaving them chronically under-represented in survey samples.

Figure 1. Declining Telephone Survey Response Rates

As a result, pollsters are venturing online to recruit samples, with reasonable success. There are some online sampling methods that are arguably as accurate as telephone probability samples. But what our analysis shows is that recruiting survey takers online is fundamentally not the same thing as analyzing what people are actually saying online. Nor is it as effective on its own as it would be combined with content analysis of the online conversation.

Another factor behind opinion polls missing out on surveying large chunks of the electorate is that, as is well-known among pollsters, MAGA Republicans are likely to hang up if they sense that a poll pursues an agenda that is critical of Trump.

Additionally, opinion polls only ask questions the researchers think to ask. If the public is thinking or speaking about a topic in a completely different frame than that used by pollsters, the poll will inevitably miss vital information about public opinion. Focus groups can mitigate this problem, but they are inevitably based on smaller, limited samples and are still an obtrusive measurement. In the end, the right questions in the right language are less likely to be asked in surveys.

The online conversation matters

For these reasons, it is imperative that comprehensive public opinion research include a thorough, data-driven analysis of what people are saying online. Voters leave their opinions, language, and priorities for all to see across social media and news site comment sections. And unlike survey research, or even focus groups, these opinions can be measured unobtrusively.

The most commonly used methods for measuring public opinion—surveys and focus groups—are, on the other hand, inherently obtrusive: the subjects know that they are being studied. This introduces a bias sometimes referred to as the “Hawthorne Effect,” in which a subject’s actions are affected or changed by their perception of being observed by a researcher.

In contrast, analyzing what people are already saying on social media is unobtrusive, since not only are people unaware that their opinions are being studied, but we can also analyze exhaustive historical content over time. Thus, in many ways, online content is more reflective of what people actually think.

Analyzing online content is also a good way to ensure the inclusion of segments of the population that are less likely to answer a survey researcher’s phone call. Young voters and MAGA voters alike post their thoughts all over the internet.

The online conversation matters not only because it can measure difficult-to-sample segments of the population, but because it can reveal what frames and language voters actually use when they talk about issues and candidates. And as any Communications 101 course will teach you, if you are not talking in a way that connects with your audience, you are talking past them.

In a way, we buried the lede. Knowing how your audience talks about your issues and candidates is the most important thing we can learn from opinion research. For candidates, this research is needed to drive campaign messaging. For pundits, it’s needed to inform campaign reporting and prognosticating.

In a recent blog post, we outlined our findings on the way people talk online about the Child Tax Credit and Social Security. Interestingly, the way real people online spoke about those issues was very different from the way advocacy organizations and politicians spoke about them. Real people online were posting about how devastating the loss of these programs would be to their own income and that of their families. Meanwhile, organizations and politicians were touting that these programs were lifting children and seniors out of poverty. And while those were great outcomes of the programs, they were not connecting with people quite as viscerally as the impact that losing these programs would have on their monthly budget.

When we applied these findings to our clients’ social media messaging, we saw substantial increases in both reach and engagement. As it turns out, listening to the online conversation and learning how those online are talking about a topic can help you more effectively engage in civic and campaign discourse.

Who was really talking with the voters?

To better understand what voters are saying about issues and candidates online, Unfiltered.Media developed technology that uses machine learning and other word parsing processes to sort social media posts based on the position they take and the phrases they use to state those positions. Unlike sentiment analysis, which simply looks at the relative use of positive and negative words and phrases, our technology assesses the position expressed in the post, regardless of the occurrence of positive or negative words. It recognizes irony and sarcasm, as well as the use of negative quotes by one person or group to make a positive statement about another (and vice versa). Once these posts are sorted by position, we can analyze the words and phrases people use to express those opinions in every camp.

Applying this technology, we developed a general political model to assess the Twitter conversation about politics in the period between Labor Day and Election Day 2022. First we trained the computer to recognize how seven distinct poles in the political space talk about the issues:

Left Activists

Right Activists

Left Organizations

Right Organizations

Democratic Politicians

Republican Politicians

Never-Trumpers

We then analyzed a probability sample of 845,000 tweets posted from September 6 through November 8, 2022 to see which of the seven poles were most in sync with how the general public talked about the election.
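To make the idea of scoring a post against the seven poles concrete, here is a minimal sketch. It is not the Unfiltered.Media technology described above, which goes well beyond word counts (it handles irony, sarcasm, and quoted speech). As a stand-in, it assumes a simple bag-of-words naive Bayes model trained on a few labeled posts; the function names `train` and `affinity` and the toy training posts are our own illustrations, not real data.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase a post and split it into simple word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def train(labeled_posts):
    """labeled_posts: iterable of (pole, text) pairs.

    Returns per-pole word counts and per-pole post counts.
    """
    word_counts = defaultdict(Counter)
    post_counts = Counter()
    for pole, text in labeled_posts:
        post_counts[pole] += 1
        word_counts[pole].update(tokenize(text))
    return word_counts, post_counts


def affinity(text, word_counts, post_counts):
    """Return {pole: probability} for one post.

    Assumption: multinomial naive Bayes with add-one smoothing,
    a deliberately simple stand-in for a real position classifier.
    """
    vocab = {w for counts in word_counts.values() for w in counts}
    total_posts = sum(post_counts.values())
    log_scores = {}
    for pole in post_counts:
        total_words = sum(word_counts[pole].values())
        score = math.log(post_counts[pole] / total_posts)
        for w in tokenize(text):
            score += math.log(
                (word_counts[pole][w] + 1) / (total_words + len(vocab))
            )
        log_scores[pole] = score
    # Convert log scores into probabilities that sum to 1.
    top = max(log_scores.values())
    exp_scores = {p: math.exp(s - top) for p, s in log_scores.items()}
    norm = sum(exp_scores.values())
    return {p: v / norm for p, v in exp_scores.items()}


# Toy, made-up training posts (two poles only, for brevity).
toy_training = [
    ("Left Activists", "corporate greed is driving inflation"),
    ("Right Activists", "government spending is driving inflation"),
]
model = train(toy_training)
scores = affinity("corporate greed", *model)
```

In this toy setup, a post that uses the “corporate greed” frame scores closer to the Left Activists pole, which is the kind of language affinity the rest of this analysis relies on.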

Figure 2. Affinity of General Political Conversation on Twitter to Seven Influencer Poles (September 6 - November 10, 2022)

Based on our analysis, from Labor Day until about a week before the election (and the day billionaire Elon Musk took control of Twitter), Left Activists dominated the affinity share of voice. This means that at any time we looked at the data, nearly half of the users we analyzed were talking about candidates and issues the way Left Activists do. This is a new indicator of enthusiasm that traditional polling, and the pundits relying on it, were missing. More specifically, this online conversation was the advantage (the “Blue Wall”) that Democrats built for the midterm elections, and that traditional media and pollsters missed when evaluating their likely voter models.

Essentially, the traditional issues that normally plague an incumbent party were erased or minimized. Republican conversations online about their key issues (inflation, crime, gas prices, etc.) were effectively undermined because Left Activists helped drive those conversations to a frame that was more favorable to Democrats. For example, if the online conversation around inflation is focused on government spending, Democrats are likely to lose. But if the conversation around inflation is instead shifted to focus on corporate greed, the conversation and the election become much more even.
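For readers who want to see what an “affinity share of voice” calculation might look like, here is a minimal sketch. It assumes each post has already been tagged with a date and its best-matching pole; the function name `daily_share_of_voice` and the toy rows are hypothetical and are not drawn from our data.

```python
from collections import Counter, defaultdict


def daily_share_of_voice(tagged_posts):
    """tagged_posts: iterable of (date, pole) pairs, one per post.

    Returns {date: {pole: share}}, where share is that pole's
    fraction of all tagged posts on that date.
    """
    counts = defaultdict(Counter)
    for day, pole in tagged_posts:
        counts[day][pole] += 1
    shares = {}
    for day, pole_counts in counts.items():
        total = sum(pole_counts.values())
        shares[day] = {pole: n / total for pole, n in pole_counts.items()}
    return shares


# Toy, made-up rows for illustration only.
toy_posts = [
    ("2022-10-26", "Left Activists"),
    ("2022-10-26", "Left Activists"),
    ("2022-10-26", "Left Activists"),
    ("2022-10-26", "Right Activists"),
]
shares = daily_share_of_voice(toy_posts)
# shares["2022-10-26"]["Left Activists"] is 0.75 for this toy input
```

Counting posts rather than followers is a deliberate choice in this sketch: it measures participation in the conversation, not the size of any one account's audience.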

Figure 3 shows how Left Activists were more in sync with the general population. In this ranked-choice analysis, a post tagged Never-Trumper was likely to have Left Activists as its second-highest probability for language affinity. That means that even Never-Trumpers tended to speak more like Left Activists than Republican Politicians, or even Right Activists.
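The ranked-choice idea can be sketched in a few lines. This assumes each post comes with a probability for every pole (for example, from a classifier); the function name `second_choice_table` and the toy probabilities are hypothetical, chosen only to show the mechanics.

```python
from collections import Counter, defaultdict


def second_choice_table(post_probs):
    """post_probs: iterable of {pole: probability} dicts, one per post.

    Returns {first_choice: Counter({second_choice: count})}, i.e. for
    posts whose top pole is X, how often each other pole ranks second.
    """
    table = defaultdict(Counter)
    for probs in post_probs:
        ranked = sorted(probs, key=probs.get, reverse=True)
        if len(ranked) >= 2:
            table[ranked[0]][ranked[1]] += 1
    return table


# Toy, made-up probabilities for two posts.
toy_probs = [
    {"Never-Trumpers": 0.5, "Left Activists": 0.3, "Right Activists": 0.2},
    {"Never-Trumpers": 0.6, "Left Activists": 0.3, "Republican Politicians": 0.1},
]
table = second_choice_table(toy_probs)
# table["Never-Trumpers"]["Left Activists"] is 2 for this toy input
```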

Figure 3. Ranked-Choice Analysis of General Political Conversation on Twitter to Seven Influencer Poles (September 6 - November 10, 2022)

One of the biggest advantages of this analysis is that we are also able to see past the metric of “reach,” which is exaggerated by accounts with large followings, in order to look at participation and frequency of mentions, which is a truer measure of engagement and enthusiasm with a topic of conversation.

Consider the most common N-grams, that is, the most frequently occurring phrases of a given number of words (Table 1), found in our sample. The top 30 most common 2-, 3-, and 4-word phrases among the 845,000+ tweets we analyzed reinforce our finding that the overall focus of the Twitter/national conversation benefited Democrats (read the full list here). For example, “Vote Blue” was mentioned more often than “Vote Republican.” “Donald Trump” and “Social Security” were the top two most mentioned topics, both of which turned out to be favorable to Democrats. We can see that the context of the Social Security conversation was more conservative before Left Activists launched efforts to retake the narrative, because the three-word phrase “Cut Social Security” also scored among the top phrases. And based on exit polling, it’s clear that any conversation about Donald J. Trump was more likely a drag on Republicans and a motivator for Democrats.

You can also see that while the Republican “gas prices” talking point on inflation was a common phrase within the conversation, contextualizing phrases tied to pro-Democratic positions, like “climate change,” “Roe v. Wade,” and even the “Inflation Reduction Act,” were not far behind, and together made up a larger share of the inflation conversation.
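Counting N-grams is simple enough to sketch here. The snippet below assumes a plain lowercase word tokenizer and raw frequency counts; the function name `top_ngrams` and the toy tweets are hypothetical, and a production pipeline would likely also handle hashtags, mentions, and retweets.

```python
import re
from collections import Counter


def top_ngrams(texts, n, k=30):
    """Return the k most common n-word phrases across texts.

    Each text is lowercased and split into simple word tokens;
    every run of n consecutive tokens counts as one phrase.
    """
    counts = Counter()
    for text in texts:
        tokens = re.findall(r"[a-z0-9']+", text.lower())
        for i in range(len(tokens) - n + 1):
            counts[" ".join(tokens[i:i + n])] += 1
    return counts.most_common(k)


# Toy, made-up tweets for illustration only.
toy_tweets = [
    "Vote Blue to protect Social Security",
    "Do not cut Social Security",
    "vote blue",
]
top_bigrams = top_ngrams(toy_tweets, n=2, k=5)
top_trigrams = top_ngrams(toy_tweets, n=3, k=5)
```

Running the same function with `n` set to 2, 3, and 4 over a full sample would produce ranked phrase lists of the kind summarized in Table 1.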

Table 1. Top 2-, 3-, and 4-Word Phrases (N-Grams) Among General Political Tweets (September 6 - November 10, 2022)

We saw hints of this elsewhere

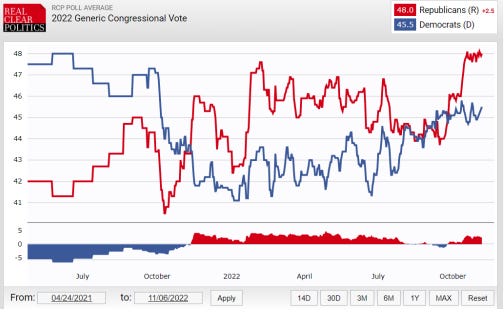

The flip in the conversation affinity on Twitter from Left Activist-dominated to Right Activist-dominated in the days after Elon Musk’s October 27 Twitter takeover maps closely to the shift in the generic Congressional poll in the last week before the elections. Around the same time Musk took over at Twitter, the conversation share shifted by 10 points in a single day, and the “generic ballot” shifted suddenly toward Republicans in an improbably large jump. Explaining this shift in the generic ballot without considering the dramatic shift occurring in the Twitter conversation would simply miss the point.

Figure 4. 2022 Generic Congressional Vote

Taken as a whole

Without incorporating a deep analysis of what people are saying online, in terms of both content and share of the conversation, predictions about elections now and for the foreseeable future will fall flat. Yes, polling and focus groups are an essential set of tools for predicting and understanding elections, but they are no longer adequate by themselves. The online conversation captures the views of people underrepresented in opinion polls, side-steps issues related to obtrusive observation error, and delivers a fine-grained analysis of language that polls cannot, because it draws on large amounts of conversation data from each day across a substantial period of time.

When we measured the online conversation, we found that the Blue Wall was holding off the Red Wave. We also found that the Blue Wall built by Left Activists has been under assault since Elon Musk took control of Twitter.

There is much still to unpack about the effect Musk is having on Twitter. Initial data shows the right rising relative to the left, but it is too soon to tell if that shift will continue. The data we do have suggests that the conversation on social media, at this moment in time, matters if we are going to accurately understand the electoral landscape.